When Artificial Intelligence Starts to Rewrite Reality – Healthcare Blog

Author: Brian C. Joondeph, MD

Artificial intelligence is quickly becoming a core part of healthcare operations. It drafts clinical notes, summarizes patient encounters, flags abnormal labs, triages messages, reviews images, aids in prior authorization, and increasingly guides decision support. Artificial intelligence is no longer just a side experiment in medicine; it is becoming a key interpreter of clinical reality.

This raises an important question for doctors, managers, and policymakers: Does artificial intelligence accurately reflect the real world, or does it subtly reinvent it?

The data is simple. According to July 2023 estimates from the U.S. Census Bureau, about 75% of Americans identify themselves as white (including Hispanic and non-Hispanic), about 14% as black or African American, about 6% as Asian, and smaller proportions as Native American, Pacific Islander, or multiracial. Hispanics or Latinos (who can be of any race) make up about 19% of the population.

In short, these figures are measurable, verifiable, and publicly accessible.

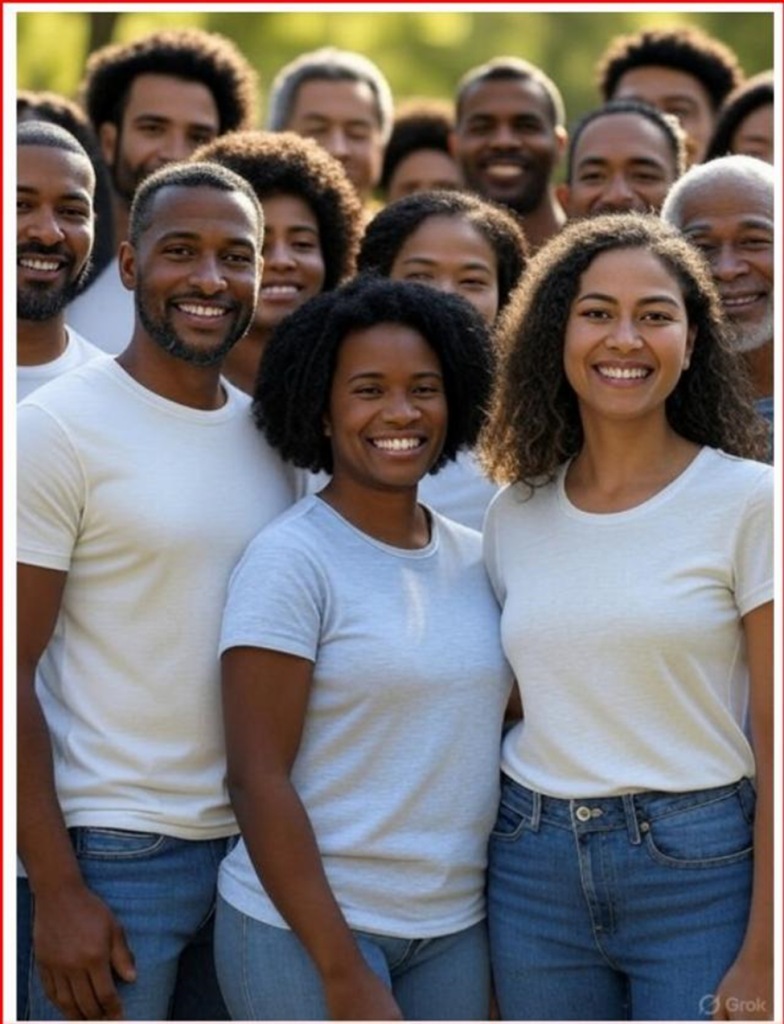

I recently conducted a simple experiment that has implications beyond image creation. I asked two of the top AI image generation platforms to create a group photo that reflects the racial makeup of the U.S. population based on official census data.

The first system I tested was Grok 3. When asked to generate a demographically accurate image based on census data, it produced an image showing only black individuals, a complete departure from reality.

After more prompts, later images showed more diversity, but white people were still underrepresented compared to their share of the population.

When asked, the system acknowledged that image-generating models may prioritize diversity or aim to address historical underrepresentation in their results.

In other words, the model does not strictly mirror the data. It's changing representation.

For comparison, I ran the same prompt through ChatGPT 5.0. The output was closer to census proportions but still required adjustments; the final image is below. When asked, the system explained that image models may prioritize visual diversity unless very specific demographic instructions are given.

This little experiment highlights a larger problem. When an AI system is explicitly told to reflect official population data but ends up producing an adjusted version of society, it’s not just a technical glitch. It shows design choices—decisions about how a model balances representation goals and the need for statistical accuracy.
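One way to put a number on that gap is to tally the people in a generated image and compare the resulting shares with the census figures quoted earlier. The sketch below is only an illustration, not a description of how either platform works. The function names, the 20-person tally, and the 5% "other" bucket (the remainder left after the three quoted shares) are all assumptions made for the example.

```python
# Census shares quoted earlier in this post (race alone). "other" is the
# remainder, an assumption made here so that the shares sum to 1.
CENSUS_SHARES = {"white": 0.75, "black": 0.14, "asian": 0.06, "other": 0.05}


def expected_counts(group_size, shares=CENSUS_SHARES):
    """Head count per category if a group matched the shares exactly."""
    return {category: group_size * share for category, share in shares.items()}


def total_variation(observed_counts, shares=CENSUS_SHARES):
    """Half the summed absolute gap between observed and reference shares.

    0.0 means a perfect match; values near 1.0 mean almost no overlap.
    """
    n = sum(observed_counts.values())
    if n == 0:
        raise ValueError("observed_counts is empty")
    categories = set(shares) | set(observed_counts)
    return 0.5 * sum(
        abs(observed_counts.get(c, 0) / n - shares.get(c, 0.0))
        for c in categories
    )


# Hypothetical tally from a 20-person generated image (not real data).
hypothetical_image = {"white": 8, "black": 6, "asian": 4, "other": 2}

print(expected_counts(20))
print(round(total_variation(hypothetical_image), 3))
```

A single summary number like this cannot say why an image departs from the reference data, but it does make the size of the departure explicit and comparable across prompts.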

This tension is particularly important in the medical field.

Healthcare is currently engaged in an active debate over the role of race in clinical algorithms. In recent years, professional societies and academic centers have reexamined race-adjusted eGFR calculations, pulmonary function test reference values, and obstetric risk scoring tools. Critics argue that using race as a biological indicator could exacerbate inequality. Others warn that removing variables without accounting for underlying epidemiology could harm the accuracy of predictions.

These debates are complex and nuanced, but they share a core principle: clinical tools must be transparent about which variables are included, why they were selected, and how they affect outcomes.

Artificial intelligence adds new levels of opacity.

Predictive models now support hospital readmission planning, sepsis alerts, imaging prioritization and population health outreach. Large language models are being incorporated into electronic health records to summarize notes and recommend management plans. Machine learning systems are trained on massive data sets that inevitably reflect historical practice patterns, population distributions, and embedded biases.

The concern is not that AI will deliberately pursue ideological goals. AI systems lack consciousness. At least for now. However, they are trained on data sets created by humans, filtered through algorithms developed by humans, and guided by guardrails set by humans. These upstream design choices impact subsequent output. Garbage in, garbage out.

If image generation tools “rebalance” demographics to promote diversity, it is reasonable to ask whether clinical AI tools might also tailor their output to pursue other goals, such as equity metrics, institutional benchmarks, regulatory incentives, or financial constraints, even if unintentionally.

Consider predictive risk modeling. Clinicians may receive misleading signals if algorithms systematically adjust output thresholds to avoid disparate impact statistics rather than accurately reflecting observed risk. If triage models are optimized to balance resource allocation metrics without appropriate clinical validation, patients may face unintended harm.
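A basic check for this kind of drift is to compare a model's average predicted risk with the event rate actually observed in each group, sometimes called calibration-in-the-large. The sketch below assumes a model that outputs probabilities and a record of which patients had the event. The function name, group labels, and numbers are hypothetical and do not come from any real system or dataset.

```python
def calibration_gap(predicted_risks, outcomes):
    """Mean predicted risk minus observed event rate for one group.

    A value near 0 suggests the scores track observed risk on average.
    A large positive or negative value suggests systematic over- or
    under-estimation for that group.
    """
    if len(predicted_risks) != len(outcomes) or not outcomes:
        raise ValueError("need equally sized, non-empty inputs")
    mean_predicted = sum(predicted_risks) / len(predicted_risks)
    observed_rate = sum(outcomes) / len(outcomes)
    return mean_predicted - observed_rate


# Hypothetical scores and outcomes (1 = event occurred); not patient data.
groups = {
    "group_a": ([0.2, 0.4, 0.6, 0.8], [0, 0, 1, 1]),
    "group_b": ([0.1, 0.2, 0.3, 0.4], [0, 1, 1, 1]),
}

for name, (risks, events) in groups.items():
    print(name, round(calibration_gap(risks, events), 2))
```

In this made-up example the scores for the first group match its observed rate, while the second group's risk is underestimated by about half. A gap like that is what clinicians would want surfaced before relying on the model's thresholds.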

Medical accuracy is not cosmetic. It is essential.

Disease prevalence varies across populations due to genetic, environmental, behavioral, and socioeconomic factors. For example, rates of hypertension, diabetes, glaucoma, sickle cell disease, and certain cancers vary significantly among different population groups. These differences are epidemiological facts, not value judgments. Ignoring them, or smoothing them over for the sake of symmetry in how groups are portrayed, may impair clinical accuracy.

None of this argues against addressing healthcare disparities. On the contrary, identifying disparities requires accurate and thorough data. If AI tools blur distinctions in the name of fairness without being transparent about it, they may paradoxically make disparities more difficult to identify and resolve.

The solution is not to oppose the integration of AI into medicine. The advantages are significant. In the field of ophthalmology, AI-assisted retinal image analysis has shown high sensitivity and specificity in detecting diabetic retinopathy.

In radiology, machine learning tools can highlight subtle findings that might otherwise go unnoticed. Clinical documentation support can help reduce burnout by reducing the amount of paperwork.

The promise is real. But so is the responsibility.

Health systems adopting AI tools should require transparency in model development, variable importance, and output adjustment policies. Developers should reveal whether population balance or representation changes are integrated into the training or inference process.

Regulators should focus on interpretability standards so that clinicians can understand not only what the algorithm recommends, but also how the algorithm arrived at those conclusions.

In healthcare, transparency is not optional; it is critical for clinical accuracy and building trust.

Patients trust that recommendations are based on evidence and clinical judgment. If AI serves as an intermediary between clinicians and patients by summarizing records, proposing diagnoses, and stratifying risks, then its output must reflect empirical reality as faithfully as possible. Otherwise, medicine may shift from evidence-based practice to narrative-driven analysis.

Artificial intelligence has huge potential to improve care delivery, expand access, and improve diagnostic accuracy. However, its credibility depends on its consistency with verifiable facts. Trust declines when algorithms begin to represent the world not as it is observed, but as their creators think it should be represented.

Medicine cannot withstand this erosion.

Data-driven care relies on data fidelity. If reality becomes fluid, so will trust. In healthcare, trust is not a luxury. It is the foundation upon which everything else rests.

Brian C. Joondeph, MD, is a Colorado ophthalmologist and retina specialist. He writes regularly on Dr. Brian's Substack about artificial intelligence, medical ethics, and the future of physician practice.
